How to Solve 10is Again Level 8 Multiplying

A multiplication algorithm is an algorithm (or method) to multiply two numbers. Depending on the size of the numbers, different algorithms are used. Efficient multiplication algorithms have existed since the advent of the decimal system.

Long multiplication

If a positional numeral system is used, a natural way of multiplying numbers is taught in schools as long multiplication, sometimes called grade-school multiplication, sometimes called the Standard Algorithm: multiply the multiplicand by each digit of the multiplier and then add up all the properly shifted results. It requires memorization of the multiplication table for single digits.

This is the usual algorithm for multiplying larger numbers by hand in base 10. A person doing long multiplication on paper will write down all the products and then add them together; an abacus-user will sum the products as soon as each one is computed.

Example

This example uses long multiplication to multiply 23,958,233 (multiplicand) by 5,830 (multiplier) and arrives at 139,676,498,390 for the result (product).

          23958233
    ×         5830
    ——————————————
          00000000    ( = 23,958,233 × 0)
         71874699     ( = 23,958,233 × 30)
       191665864      ( = 23,958,233 × 800)
    + 119791165       ( = 23,958,233 × 5,000)
    ——————————————
      139676498390    ( = 139,676,498,390)

Other notations

In some countries such as Germany, the above multiplication is depicted similarly but with the original product kept horizontal and computation starting with the first digit of the multiplier:[1]

    23958233 · 5830
    ———————————————
       119791165
        191665864
          71874699
           00000000
    ———————————————
       139676498390

The pseudocode below describes the process of the above multiplication. It keeps only one row to maintain the sum, which finally becomes the result. Note that the '+=' operator is used to denote sum to existing value and store operation (akin to languages such as Java and C) for compactness.

    multiply(a[1..p], b[1..q], base)             // Operands containing rightmost digits at index 1
      product = [1..p+q]                         // Allocate space for result, initialized to 0
      for b_i = 1 to q                           // for all digits in b
        carry = 0
        for a_i = 1 to p                         // for all digits in a
          product[a_i + b_i - 1] += carry + a[a_i] * b[b_i]
          carry = product[a_i + b_i - 1] / base  // integer division
          product[a_i + b_i - 1] = product[a_i + b_i - 1] mod base
        product[b_i + p] = carry                 // last digit comes from final carry
      return product

Usage in computers

Some chips implement long multiplication, in hardware or in microcode, for various integer and floating-point word sizes. In arbitrary-precision arithmetic, it is common to use long multiplication with the base set to $2^w$, where $w$ is the number of bits in a word, for multiplying relatively small numbers. To multiply two numbers with $n$ digits using this method, one needs about $n^2$ operations. More formally, multiplying two $n$-digit numbers using long multiplication requires $\Theta(n^2)$ single-digit operations (additions and multiplications).
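The pseudocode above can be transcribed into Python as a minimal sketch. Two assumptions differ from it: digit lists here are little-endian with the least significant digit at index 0 (the pseudocode starts at index 1), and the helpers `to_digits` and `from_digits` are illustrative names, not part of any library. Passing `base=2**32` treats each 32-bit word as one digit, in the spirit of the $2^w$ base described above.

```python
def to_digits(n, base):
    """Split a non-negative integer into little-endian digits in the given base."""
    digits = []
    while True:
        n, d = divmod(n, base)
        digits.append(d)
        if n == 0:
            return digits


def from_digits(digits, base):
    """Rebuild an integer from little-endian digits (inverse of to_digits)."""
    n = 0
    for d in reversed(digits):
        n = n * base + d
    return n


def long_multiply(a, b, base):
    """Long multiplication of little-endian digit lists, keeping one result row."""
    p, q = len(a), len(b)
    product = [0] * (p + q)               # space for the result, initialized to zero
    for j in range(q):                    # for all digits in b
        carry = 0
        for i in range(p):                # for all digits in a
            t = product[i + j] + carry + a[i] * b[j]
            carry, product[i + j] = divmod(t, base)
        product[j + p] = carry            # last digit of this row comes from the final carry
    return product


x, y = 23958233, 5830
for base in (10, 2**32):
    digits = long_multiply(to_digits(x, base), to_digits(y, base), base)
    assert from_digits(digits, base) == x * y == 139676498390
```

Because each step stores only `t mod base` and carries the rest, every stored digit stays below `base`, which is what lets a fixed-width word hold it.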

When implemented in software, long multiplication algorithms must deal with overflow during additions, which can be expensive. A typical solution is to represent the number in a small base, $b$, such that, for example, $8b$ is a representable machine integer. Several additions can then be performed before an overflow occurs. When the number becomes too large, we add part of it to the result, or we carry and map the remaining part back to a number that is less than $b$. This process is called normalization. Richard Brent used this approach in his Fortran package, MP.[2]

Computers initially used a very similar algorithm to long multiplication in base 2, but modern processors have optimized circuitry for fast multiplications using more efficient algorithms, at the price of a more complex hardware realization.[ citation needed ] In base two, long multiplication is sometimes called "shift and add", because the algorithm simplifies and just consists of shifting left (multiplying by powers of two) and adding. Most currently available microprocessors implement this or other similar algorithms (such as Booth encoding) for various integer and floating-point sizes in hardware multipliers or in microcode.[ citation needed ]
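As a rough illustration of "shift and add", the Python sketch below multiplies non-negative integers using only bit tests, shifts and additions. Python's unbounded integers stand in for hardware registers, so overflow and fixed word sizes are not modelled; the function name is illustrative.

```python
def shift_and_add(x, y):
    """Multiply non-negative integers with shifts and additions only (base-2 long multiplication)."""
    result = 0
    while y:
        if y & 1:        # lowest remaining multiplier bit is 1: add the shifted multiplicand
            result += x
        x <<= 1          # the next multiplier bit carries twice the weight
        y >>= 1          # move on to the next multiplier bit
    return result


assert shift_and_add(23958233, 5830) == 139676498390
```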

On currently available processors, a bit-wise shift instruction is usually faster than a multiply instruction and can be used to multiply (shift left) and divide (shift right) by powers of 2. Multiplication by a constant and division by a constant can be implemented using a sequence of shifts and adds or subtracts. For example, there are several ways to multiply by 10 using only bit-shift and addition.

    ((x << 2) + x) << 1  # Here 10*x is computed as (x*2^2 + x)*2
    (x << 3) + (x << 1)  # Here 10*x is computed as x*2^3 + x*2

In some cases such sequences of shifts and adds or subtracts will outperform hardware multipliers and especially dividers. A division by a number of the form $2^n$ or $2^n \pm 1$ often can be converted to such a short sequence.

Algorithms for multiplying by hand

In addition to the standard long multiplication, there are several other methods used to perform multiplication by hand. Such algorithms may be devised for speed, ease of calculation, or educational value, particularly when computers or multiplication tables are unavailable.

Grid method

The grid method (or box method) is an introductory method for multiple-digit multiplication that is often taught to pupils at primary school or elementary school. It has been a standard part of the national primary school mathematics curriculum in England and Wales since the late 1990s.[3]

Both factors are broken up ("partitioned") into their hundreds, tens and units parts, and the products of the parts are then calculated explicitly in a relatively simple multiplication-only stage, before these contributions are then totalled to give the final answer in a separate addition stage.

The calculation 34 × 13, for example, could be computed using the grid:

| × | 30 | 4 |
|---|---|---|
| 10 | 300 | 40 |
| 3 | 90 | 12 |

followed by addition to obtain 442, either in a single sum (see right), or through forming the row-by-row totals (300 + 40) + (90 + 12) = 340 + 102 = 442.

This calculation approach (though not necessarily with the explicit grid arrangement) is also known as the partial products algorithm. Its essence is the calculation of the simple multiplications separately, with all addition being left to the final gathering-up stage.

The grid method can in principle be applied to factors of any size, although the number of sub-products becomes cumbersome as the number of digits increases. Nevertheless, it is seen as a usefully explicit method to introduce the idea of multiple-digit multiplications; and, in an age when most multiplication calculations are done using a calculator or a spreadsheet, it may in practice be the only multiplication algorithm that some students will ever need.
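The two stages of the grid method can be sketched in Python as follows. The helper `partition` (an illustrative name) splits a factor into its place-value parts; zero parts are skipped, which does not change the total.

```python
def partition(n):
    """Split n into its non-zero place-value parts, e.g. 34 -> [30, 4]."""
    s = str(n)
    return [int(d) * 10 ** (len(s) - 1 - i) for i, d in enumerate(s) if d != "0"]


def grid_multiply(x, y):
    """Grid method: a multiplication-only stage, then a separate addition stage."""
    # Multiplication stage: one grid row per part of y, one column per part of x.
    grid = [[r * c for c in partition(x)] for r in partition(y)]
    # Addition stage: total each row, then total the row sums.
    row_totals = [sum(row) for row in grid]
    return grid, row_totals, sum(row_totals)


print(grid_multiply(34, 13))   # ([[300, 40], [90, 12]], [340, 102], 442)
```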

Lattice multiplication

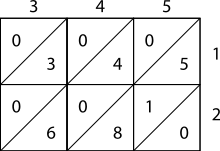

First, set up the grid by marking its rows and columns with the numbers to be multiplied. Then, fill in the boxes with tens digits in the top triangles and units digits on the bottom.

Finally, sum along the diagonal tracts and carry as needed to get the answer.

Lattice, or sieve, multiplication is algorithmically equivalent to long multiplication. It requires the preparation of a lattice (a grid drawn on paper) which guides the calculation and separates all the multiplications from the additions. It was introduced to Europe in 1202 in Fibonacci's Liber Abaci. Fibonacci described the operation as mental, using his right and left hands to carry the intermediate calculations. Matrakçı Nasuh presented 6 different variants of this method in his 16th-century book, Umdet-ul Hisab. It was widely used in Enderun schools across the Ottoman Empire.[4] Napier's bones, or Napier's rods, also used this method, as published by Napier in 1617, the year of his death.

As shown in the example, the multiplicand and multiplier are written above and to the right of a lattice, or a sieve. It is found in Muhammad ibn Musa al-Khwarizmi's "Arithmetic", one of Leonardo's sources mentioned by Sigler, author of "Fibonacci's Liber Abaci", 2002.[ citation needed ]

- During the multiplication phase, the lattice is filled in with 2-digit products of the corresponding digits labeling each row and column: the tens digit goes in the top-left corner.

- During the addition phase, the lattice is summed on the diagonals.

- Finally, if a carry phase is necessary, the answer as shown along the left and bottom sides of the lattice is converted to normal form by carrying ten's digits as in long addition or multiplication.
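The three phases can be sketched in Python as below, assuming non-negative integer inputs. Each cell is stored as a `(tens, units)` pair, and `diagonals[k]` (an illustrative name) collects every triangle whose place value is $10^k$, so the list holds the diagonal sums before the carry phase.

```python
def lattice_multiply(x, y):
    """Lattice multiplication: fill cells with 2-digit products, sum diagonals, then carry."""
    top = [int(d) for d in str(x)]        # multiplicand along the top
    side = [int(d) for d in str(y)]       # multiplier down the right-hand side
    m, n = len(top), len(side)
    # Multiplication phase: each cell holds (tens, units) of a single-digit product.
    cells = [[divmod(a * b, 10) for a in top] for b in side]
    # Addition phase: a diagonal collects every triangle with the same place value.
    diagonals = [0] * (m + n)             # diagonals[k] has weight 10**k
    for r, row in enumerate(cells):
        for c, (tens, units) in enumerate(row):
            k = (m - 1 - c) + (n - 1 - r) # place value of this cell's units triangle
            diagonals[k] += units
            diagonals[k + 1] += tens      # the tens triangle sits one diagonal higher
    # Carry phase: turn the diagonal sums into ordinary base-10 digits.
    digits, carry = [], 0
    for s in diagonals:
        carry, d = divmod(s + carry, 10)
        digits.append(d)
    while carry:
        carry, d = divmod(carry, 10)
        digits.append(d)
    return diagonals, int("".join(map(str, reversed(digits))))


diagonals, product = lattice_multiply(23958233, 5830)
# diagonals == [0, 9, 13, 17, 18, 13, 15, 26, 24, 17, 2, 1]; product == 139676498390
```

Read from the highest weight down, the diagonal sums are 01, 02, 17, 24 (left side) followed by 26, 15, 13, 18, 17, 13, 09, 00 (bottom), matching the lattice in the example.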

Example

The pictures on the right show how to calculate 345 × 12 using lattice multiplication. As a more complicated example, consider the picture below displaying the computation of 23,958,233 multiplied by 5,830 (multiplier); the result is 139,676,498,390. Notice 23,958,233 is along the top of the lattice and 5,830 is along the right side. The products fill the lattice and the sums of those products (on the diagonal) are along the left and bottom sides. Then those sums are totaled as shown.

         2   3   9   5   8   2   3   3
       +---+---+---+---+---+---+---+---+-
       |1 /|1 /|4 /|2 /|4 /|1 /|1 /|1 /|
       | / | / | / | / | / | / | / | / |
     01|/ 0|/ 5|/ 5|/ 5|/ 0|/ 0|/ 5|/ 5| 5
       +---+---+---+---+---+---+---+---+-
       |1 /|2 /|7 /|4 /|6 /|1 /|2 /|2 /|
       | / | / | / | / | / | / | / | / |
     02|/ 6|/ 4|/ 2|/ 0|/ 4|/ 6|/ 4|/ 4| 8
       +---+---+---+---+---+---+---+---+-
       |0 /|0 /|2 /|1 /|2 /|0 /|0 /|0 /|
       | / | / | / | / | / | / | / | / |
     17|/ 6|/ 9|/ 7|/ 5|/ 4|/ 6|/ 9|/ 9| 3
       +---+---+---+---+---+---+---+---+-
       |0 /|0 /|0 /|0 /|0 /|0 /|0 /|0 /|
       | / | / | / | / | / | / | / | / |
     24|/ 0|/ 0|/ 0|/ 0|/ 0|/ 0|/ 0|/ 0| 0
       +---+---+---+---+---+---+---+---+-
         26  15  13  18  17  13  09  00

     01
     002
     0017
     00024
     000026
     0000015
     00000013
     000000018
     0000000017
     00000000013
     000000000009
     0000000000000
     —————————————
      139676498390

= 139,676,498,390

Russian peasant multiplication

The binary method is also known as peasant multiplication, because it has been widely used by people who are classified as peasants and thus have not memorized the multiplication tables required for long multiplication.[5][ failed verification ] The algorithm was in use in ancient Egypt.[6][7] Its main advantages are that it can be taught quickly, requires no memorization, and can be performed using tokens, such as poker chips, if paper and pencil aren't available. The disadvantage is that it takes more steps than long multiplication, so it can be unwieldy for large numbers.

Description

On paper, write down in one column the numbers you get when you repeatedly halve the multiplier, ignoring the remainder; in a column beside it repeatedly double the multiplicand. Cross out each row in which the last digit of the first number is even, and add the remaining numbers in the second column to obtain the product.
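The halving-and-doubling procedure can be sketched in Python as below, assuming non-negative integer inputs; the function name and the `(left, right, keep)` row format are illustrative. The returned rows mirror the two columns on paper, with `keep` false for crossed-out rows.

```python
def peasant_multiply(multiplier, multiplicand):
    """Russian peasant multiplication: returns the halving/doubling table and the product."""
    rows = []
    while multiplier >= 1:
        keep = multiplier % 2 == 1          # rows whose left number is even are crossed out
        rows.append((multiplier, multiplicand, keep))
        multiplier //= 2                    # halve, ignoring the remainder
        multiplicand *= 2                   # double
    product = sum(right for _, right, keep in rows if keep)
    return rows, product


rows, product = peasant_multiply(11, 3)
# rows == [(11, 3, True), (5, 6, True), (2, 12, False), (1, 24, True)], product == 33
```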

Examples

This example uses peasant multiplication to multiply 11 by 3 to arrive at a result of 33.

    Decimal:     Binary:
    11    3      1011     11
     5    6       101    110
     2   12        10   1100
     1   24         1  11000
         ——           ——————
         33           100001

Describing the steps explicitly:

- 11 and 3 are written at the top

- 11 is halved (5.5) and 3 is doubled (6). The fractional portion is discarded (5.5 becomes 5).

- 5 is halved (2.5) and 6 is doubled (12). The fractional portion is discarded (2.5 becomes 2). The figure in the left column (2) is even, so the figure in the right column (12) is discarded.

- 2 is halved (1) and 12 is doubled (24).

- All non-scratched-out values are summed: 3 + 6 + 24 = 33.

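The method works because multiplication is distributive, so:

$$3 \times 11 = 3 \times (1 \times 2^0 + 1 \times 2^1 + 0 \times 2^2 + 1 \times 2^3) = 3 \times 1 + 3 \times 2 + 3 \times 8 = 3 + 6 + 24 = 33.$$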

A more complicated example, using the figures from the earlier examples (23,958,233 and 5,830):

    Decimal:            Binary:
    5830     23958233   1011011000110              1011011011001001011011001
    2915     47916466    101101100011             10110110110010010110110010
    1457     95832932     10110110001            101101101100100101101100100
     728    191665864      1011011000           1011011011001001011011001000
     364    383331728       101101100          10110110110010010110110010000
     182    766663456        10110110         101101101100100101101100100000
      91   1533326912         1011011        1011011011001001011011001000000
      45   3066653824          101101       10110110110010010110110010000000
      22   6133307648           10110      101101101100100101101100100000000
      11  12266615296            1011     1011011011001001011011001000000000
       5  24533230592             101    10110110110010010110110010000000000
       2  49066461184              10   101101101100100101101100100000000000
       1  98132922368               1  1011011011001001011011001000000000000
         ————————————                  1022143253354344244353353243222210110 (before carry)
         139676498390                 10000010000101010111100011100111010110

Quarter square multiplication

Two quantities can be multiplied using quarter squares by employing the following identity involving the floor function that some sources[8][9] attribute to Babylonian mathematics (2000–1600 BC):

$$xy = \left\lfloor \frac{(x+y)^2}{4} \right\rfloor - \left\lfloor \frac{(x-y)^2}{4} \right\rfloor$$

If one of $x+y$ and $x-y$ is odd, the other is odd too, thus their squares are 1 mod 4, so taking the floor reduces both by a quarter; the subtraction then cancels the quarters out, and discarding the remainders does not introduce any difference compared with the same expression without the floor functions. Below is a lookup table of quarter squares with the remainder discarded for the digits 0 through 18; this allows for the multiplication of numbers up to 9×9.

| n | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ⌊n²/4⌋ | 0 | 0 | 1 | 2 | 4 | 6 | 9 | 12 | 16 | 20 | 25 | 30 | 36 | 42 | 49 | 56 | 64 | 72 | 81 |

If, for example, you wanted to multiply 9 by 3, you observe that the sum and difference are 12 and 6 respectively. Looking both those values up on the table yields 36 and 9, the difference of which is 27, which is the product of 9 and 3.
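The table lookup can be sketched in Python for non-negative integers; the function names are illustrative, and the absolute difference is used so that only non-negative table indices are needed. With `limit = 510`, the same table covers all pairs of 8-bit operands.

```python
def quarter_square_table(limit):
    """Quarter squares floor(n*n/4) for n = 0..limit, remainders discarded."""
    return [n * n // 4 for n in range(limit + 1)]


def quarter_square_multiply(x, y, table):
    """Multiply non-negative x and y using two table lookups and one subtraction."""
    return table[x + y] - table[abs(x - y)]


digits_table = quarter_square_table(18)                     # the 0..18 table shown above
assert quarter_square_multiply(9, 3, digits_table) == 27    # 36 - 9

byte_table = quarter_square_table(510)                      # 511 entries cover all 8-bit pairs
assert byte_table[510] == 65025
assert quarter_square_multiply(255, 200, byte_table) == 51000
```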

Antoine Voisin published a table of quarter squares from 1 to 1000 in 1817 as an aid in multiplication. A larger table of quarter squares from 1 to 100000 was published by Samuel Laundy in 1856,[10] and a table from 1 to 200000 by Joseph Blater in 1888.[11]

Quarter square multipliers were used in analog computers to form an analog signal that was the product of two analog input signals. In this application, the sum and difference of two input voltages are formed using operational amplifiers. The square of each of these is approximated using piecewise linear circuits. Finally the difference of the two squares is formed and scaled by a factor of one fourth using yet another operational amplifier.

In 1980, Everett L. Johnson proposed using the quarter square method in a digital multiplier.[12] To form the product of two 8-bit integers, for example, the digital device forms the sum and difference, looks both quantities up in a table of squares, takes the difference of the results, and divides by four by shifting two bits to the right. For 8-bit integers the table of quarter squares will have $2^9 - 1 = 511$ entries (one entry for the full range 0..510 of possible sums, the differences using only the first 256 entries in range 0..255) or $2^9 - 1 = 511$ entries (using for negative differences the technique of two's complement and 9-bit masking, which avoids testing the sign of differences), each entry being 16 bits wide (the entry values are from $0^2/4 = 0$ to $510^2/4 = 65025$).

The quarter square multiplier technique has also benefited 8-bit systems that do not have any support for a hardware multiplier. Charles Putney implemented this for the 6502.[13]

Computational complexity of multiplication

Unsolved problem in computer science:

What is the fastest algorithm for multiplication of two $n$-digit numbers?

Karatsuba multiplication

For systems that need to multiply numbers in the range of several thousand digits, such as computer algebra systems and bignum libraries, long multiplication is too slow. These systems may use Karatsuba multiplication, which was discovered in 1960 (published in 1962). The heart of Karatsuba's method lies in the observation that two-digit multiplication can be done with only three rather than the four multiplications classically required. This is an example of what is now called a divide-and-conquer algorithm. Suppose we want to multiply two 2-digit base-$m$ numbers: $x_1 m + x_2$ and $y_1 m + y_2$:

- compute $x_1 \cdot y_1$, call the result $F$

- compute $x_2 \cdot y_2$, call the result $G$

- compute $(x_1 + x_2) \cdot (y_1 + y_2)$, call the result $H$

- compute $H - F - G$, call the result $K$; this number is equal to $x_1 \cdot y_2 + x_2 \cdot y_1$

- compute $F \cdot m^2 + K \cdot m + G$.

To compute these three products of base-$m$ numbers, we can use the same trick again, effectively using recursion. Once the numbers are computed, we need to add them together (steps 4 and 5), which takes about $n$ operations.

Karatsuba multiplication has a time complexity of $O(n^{\log_2 3}) \approx O(n^{1.585})$, making this method significantly faster than long multiplication. Because of the overhead of recursion, Karatsuba's multiplication is slower than long multiplication for small values of $n$; typical implementations therefore switch to long multiplication for small values of $n$.
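A compact recursive sketch in Python follows, splitting operands at bit $k$ so that $m = 2^k$. The `cutoff` parameter is an assumed threshold, not a tuned value; it plays the role of the switch to long multiplication for small operands, and here the base case simply uses Python's built-in `*`.

```python
import random


def karatsuba(x, y, cutoff=2**64):
    """Karatsuba multiplication of non-negative integers, splitting in base m = 2**k."""
    if x < cutoff or y < cutoff:
        return x * y                          # small operands: use ordinary multiplication
    k = max(x.bit_length(), y.bit_length()) // 2
    mask = (1 << k) - 1
    x1, x2 = x >> k, x & mask                 # x = x1*m + x2
    y1, y2 = y >> k, y & mask                 # y = y1*m + y2
    F = karatsuba(x1, y1, cutoff)
    G = karatsuba(x2, y2, cutoff)
    H = karatsuba(x1 + x2, y1 + y2, cutoff)
    K = H - F - G                             # equals x1*y2 + x2*y1
    return (F << (2 * k)) + (K << k) + G      # F*m^2 + K*m + G


a, b = random.getrandbits(4096), random.getrandbits(3000)
assert karatsuba(a, b) == a * b
```

Splitting on a power of two keeps the multiplications by $m$ and $m^2$ as shifts, so only the three recursive products are genuine multiplications.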

Karatsuba's algorithm was the first known algorithm for multiplication that is asymptotically faster than long multiplication,[14] and can thus be viewed as the starting point for the theory of fast multiplications.

Toom–Cook

Another method of multiplication is called Toom–Cook or Toom-3. The Toom–Cook method splits each number to be multiplied into multiple parts. The Toom–Cook method is one of the generalizations of the Karatsuba method. A three-way Toom–Cook can do a size-$3N$ multiplication for the cost of five size-$N$ multiplications. This accelerates the operation by a factor of 9/5, while the Karatsuba method accelerates it by 4/3.

Although using more and more parts can reduce the time spent on recursive multiplications further, the overhead from additions and digit management also grows. For this reason, the method of Fourier transforms is typically faster for numbers with several thousand digits, and asymptotically faster for even larger numbers.

Fourier transform method

Demonstration of multiplying 1234 × 5678 = 7006652 using fast Fourier transforms (FFTs). Number-theoretic transforms in the integers modulo 337 are used, selecting 85 as an 8th root of unity. Base 10 is used in place of base $2^w$ for illustrative purposes.

The basic idea due to Strassen (1968) is to use fast polynomial multiplication to perform fast integer multiplication. The algorithm was made practical and theoretical guarantees were provided in 1971 by Schönhage and Strassen resulting in the Schönhage–Strassen algorithm.[15] The details are the following: We choose the largest integer $w$ that will not cause overflow during the process outlined below. Then we split the two numbers into $m$ groups of $w$ bits as follows:

$$a = \sum_{i=0}^{m-1} a_i 2^{wi} \quad\text{and}\quad b = \sum_{j=0}^{m-1} b_j 2^{wj}$$

We look at these numbers as polynomials in $x$, where $x = 2^w$, to get

$$a = \sum_{i=0}^{m-1} a_i x^{i} \quad\text{and}\quad b = \sum_{j=0}^{m-1} b_j x^{j}$$

Then we can say that

$$ab = \sum_{i=0}^{m-1} \sum_{j=0}^{m-1} a_i b_j x^{i+j} = \sum_{k=0}^{2m-2} c_k x^{k}$$

Clearly the above setting is realized by polynomial multiplication of two polynomials $a$ and $b$. The crucial step now is to use Fast Fourier multiplication of polynomials to realize the multiplications above faster than in naive $O(m^2)$ time.

To remain in the modular setting of Fourier transforms, we look for a ring with a $(2m)$th root of unity. Hence we do multiplication modulo $N$ (and thus in the $\mathbb{Z}/N\mathbb{Z}$ ring). Further, $N$ must be chosen so that there is no 'wrap around', essentially, no reductions modulo $N$ occur. Thus, the choice of $N$ is crucial. For example, it could be done as

$$N = 2^{3m} + 1$$

The ring $\mathbb{Z}/N\mathbb{Z}$ would thus have a $(2m)$th root of unity, namely 8. Also, it can be checked that $c_k < N$, and thus no wrap around will occur.
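The demonstration described at the start of this section (1234 × 5678 with a number-theoretic transform modulo 337, using 85 as an 8th root of unity and base 10) can be reproduced with the short Python sketch below. It uses those small illustrative parameters rather than $N = 2^{3m} + 1$, a fixed transform length of 8, and a recursive radix-2 transform; it rejects inputs for which a coefficient could reach 337, which is exactly the wrap around that the choice of $N$ must rule out. The names `ntt` and `ntt_multiply` are illustrative.

```python
MOD = 337    # modulus from the demonstration (prime, and 8 divides 336)
ROOT = 85    # primitive 8th root of unity mod 337: 85**4 % 337 == 336, i.e. -1
SIZE = 8     # transform length


def ntt(a, root):
    """Recursive radix-2 number-theoretic transform; len(a) must be a power of two."""
    n = len(a)
    if n == 1:
        return list(a)
    even = ntt(a[0::2], root * root % MOD)
    odd = ntt(a[1::2], root * root % MOD)
    out = [0] * n
    w = 1
    for k in range(n // 2):
        t = w * odd[k] % MOD
        out[k] = (even[k] + t) % MOD
        out[k + n // 2] = (even[k] - t) % MOD   # relies on root**(n/2) == -1
        w = w * root % MOD
    return out


def ntt_multiply(x, y):
    """Multiply small non-negative integers via base-10 digit polynomials mod 337."""
    a = [int(d) for d in reversed(str(x))]       # little-endian base-10 digits
    b = [int(d) for d in reversed(str(y))]
    # Each product coefficient is at most min(len) * 81; it must stay below MOD.
    if len(a) + len(b) - 1 > SIZE or min(len(a), len(b)) * 81 >= MOD:
        raise ValueError("operands too large for this fixed-size transform")
    a += [0] * (SIZE - len(a))
    b += [0] * (SIZE - len(b))
    fa, fb = ntt(a, ROOT), ntt(b, ROOT)
    fc = [u * v % MOD for u, v in zip(fa, fb)]   # pointwise products
    inv_size = pow(SIZE, -1, MOD)                # modular inverse (Python 3.8+)
    c = [v * inv_size % MOD for v in ntt(fc, pow(ROOT, -1, MOD))]   # inverse transform
    return sum(coeff * 10 ** k for k, coeff in enumerate(c))        # carries resolved here


assert ntt_multiply(1234, 5678) == 7006652
```

The recovered coefficients for this example are 32, 52, 61, 60, 34, 16, 5 (lowest digit first), all below 337, so reading them back with powers of 10 gives the exact product.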

The algorithm has a time complexity of $\Theta(n \log n \log \log n)$ and is used in practice for numbers with more than 10,000 to 40,000 decimal digits. In 2007 this was improved by Martin Fürer (Fürer's algorithm)[16] to give a time complexity of $n \log n \cdot 2^{\Theta(\log^* n)}$ using Fourier transforms over complex numbers. Anindya De, Chandan Saha, Piyush Kurur and Ramprasad Saptharishi[17] gave a similar algorithm using modular arithmetic in 2008 achieving the same running time. In context of the above material, what these latter authors have achieved is to find $N$ much less than $2^{3m} + 1$, so that $\mathbb{Z}/N\mathbb{Z}$ has a $(2m)$th root of unity. This speeds up computation and reduces the time complexity. However, these latter algorithms are only faster than Schönhage–Strassen for impractically large inputs.

In March 2019, David Harvey and Joris van der Hoeven announced their discovery of an $O(n \log n)$ multiplication algorithm.[18] It was published in the Annals of Mathematics in 2021.[19]

Using number-theoretic transforms instead of discrete Fourier transforms avoids rounding error problems by using modular arithmetic instead of floating-point arithmetic. In order to apply the factoring which enables the FFT to work, the length of the transform must be factorable to small primes and must be a factor of $N - 1$, where $N$ is the field size. In particular, calculation using a Galois field GF($k^2$), where $k$ is a Mersenne prime, allows the use of a transform sized to a power of 2; e.g. $k = 2^{31} - 1$ supports transform sizes up to $2^{32}$.

Lower bounds

There is a trivial lower bound of $\Omega(n)$ for multiplying two $n$-bit numbers on a single processor; no matching algorithm (on conventional machines, that is on Turing equivalent machines) nor any sharper lower bound is known. Multiplication lies outside of $\mathrm{AC}^0[p]$ for any prime $p$, meaning there is no family of constant-depth, polynomial (or even subexponential) size circuits using AND, OR, NOT, and MOD $p$ gates that can compute a product. This follows from a constant-depth reduction of MOD $q$ to multiplication.[20] Lower bounds for multiplication are also known for some classes of branching programs.[21]

Complex number multiplication

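Complex multiplication normally involves four multiplications and two additions.

$$(a+bi) \cdot (c+di) = (ac-bd) + (bc+ad)i.$$

Or

$$\begin{array}{c|c|c} \times & a & bi \\ \hline c & ac & bci \\ \hline di & adi & -bd \end{array}$$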

As observed by Peter Ungar in 1963, one can reduce the number of multiplications to three, using essentially the same computation as Karatsuba's algorithm.[22] The product $(a+bi) \cdot (c+di)$ can be calculated in the following way.

- $k_1 = c \cdot (a + b)$

- $k_2 = a \cdot (d - c)$

- $k_3 = b \cdot (c + d)$

- Real part $= k_1 - k_3$

- Imaginary part $= k_1 + k_2$.

This algorithm uses only three multiplications, rather than four, and five additions or subtractions rather than two. If a multiply is more expensive than three adds or subtracts, as when calculating by hand, then there is a gain in speed. On modern computers a multiply and an add can take about the same time, so there may be no speed gain. There is a trade-off in that there may be some loss of precision when using floating point.
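The three-multiplication scheme translates directly into Python. Integer inputs are used in this sketch so the result is exact; with floating-point inputs the precision trade-off mentioned above applies. The function name is illustrative.

```python
def ungar_multiply(a, b, c, d):
    """(a + bi)(c + di) using three real multiplications instead of four."""
    k1 = c * (a + b)
    k2 = a * (d - c)
    k3 = b * (c + d)
    return k1 - k3, k1 + k2            # (real part, imaginary part)


assert ungar_multiply(1, 2, 3, 4) == (-5, 10)   # (1 + 2i)(3 + 4i) = -5 + 10i
```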

For fast Fourier transforms (FFTs) (or any linear transformation) the complex multiplies are by constant coefficients $c + di$ (called twiddle factors in FFTs), in which case two of the additions ($d - c$ and $c + d$) can be precomputed. Hence, only three multiplies and three adds are required.[23] However, trading off a multiplication for an addition in this way may no longer be beneficial with modern floating-point units.[24]

Polynomial multiplication

All the above multiplication algorithms can also be expanded to multiply polynomials. For instance, the Strassen algorithm may be used for polynomial multiplication.[25] Alternatively, the Kronecker substitution technique may be used to convert the problem of multiplying polynomials into a single binary multiplication.[26]
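Kronecker substitution can be sketched in Python for polynomials with non-negative integer coefficients, listed lowest degree first (an assumption of this sketch; signed coefficients need extra handling). Each polynomial is evaluated at $x = 2^{\text{bits}}$, the two resulting integers are multiplied once, and the product's coefficients are read back out of fixed-width bit slots, with the slot width chosen so that no product coefficient can spill into its neighbour.

```python
def kronecker_multiply(p, q):
    """Multiply polynomials with non-negative integer coefficients, lowest degree first."""
    if not p or not q:
        return []
    # A product coefficient is a sum of at most min(len(p), len(q)) terms,
    # each at most max(p) * max(q); every slot must be able to hold that bound.
    bound = min(len(p), len(q)) * max(p) * max(q)
    bits = max(1, bound.bit_length())

    def evaluate(coeffs):                 # value of the polynomial at x = 2**bits
        n = 0
        for c in reversed(coeffs):
            n = (n << bits) + c
        return n

    packed = evaluate(p) * evaluate(q)    # the single big-integer multiplication
    mask = (1 << bits) - 1
    result = []
    for _ in range(len(p) + len(q) - 1):
        result.append(packed & mask)      # read one coefficient out of its slot
        packed >>= bits
    return result


assert kronecker_multiply([1, 2], [3, 4]) == [3, 10, 8]   # (1 + 2x)(3 + 4x)
```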

Long multiplication methods can be generalised to allow the multiplication of algebraic formulae:

14ac - 3ab + 2 multiplied by ac - ab + 1

                       14ac               -3ab    2
                         ac                -ab    1
    ———————————————————————————————————————————————
    14a²c²    -3a²bc    2ac
             -14a²bc             3a²b²    -2ab
                       14ac               -3ab    2
    ———————————————————————————————————————————————
    14a²c²   -17a²bc   16ac      3a²b²    -5ab   +2
    ===============================================[27]
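The same term-by-term procedure can be sketched in Python by storing a polynomial as a dictionary from exponent tuples to coefficients. The representation is an assumption of this sketch: a tuple `(i, j, k)` stands for $a^i b^j c^k$, so $14ac$ is `(1, 0, 1): 14`.

```python
from collections import defaultdict


def poly_multiply(p, q):
    """Multiply polynomials given as {exponent tuple: coefficient}, term by term."""
    result = defaultdict(int)
    for exps_p, coef_p in p.items():              # every term of p meets every term of q
        for exps_q, coef_q in q.items():
            exps = tuple(e1 + e2 for e1, e2 in zip(exps_p, exps_q))
            result[exps] += coef_p * coef_q       # like terms collect in the same column
    return {e: c for e, c in result.items() if c != 0}


# Exponent tuples are (power of a, power of b, power of c).
first = {(1, 0, 1): 14, (1, 1, 0): -3, (0, 0, 0): 2}     # 14ac - 3ab + 2
second = {(1, 0, 1): 1, (1, 1, 0): -1, (0, 0, 0): 1}     # ac - ab + 1
assert poly_multiply(first, second) == {
    (2, 0, 2): 14, (2, 1, 1): -17, (1, 0, 1): 16,        # 14a²c² - 17a²bc + 16ac
    (2, 2, 0): 3, (1, 1, 0): -5, (0, 0, 0): 2,           # + 3a²b² - 5ab + 2
}
```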

As a further example of column-based multiplication, consider multiplying 23 long tons (t), 12 hundredweight (cwt) and 2 quarters (qtr) by 47. This example uses avoirdupois measures: 1 t = 20 cwt, 1 cwt = 4 qtr.

          t    cwt  qtr
         23     12    2
                     47 x
    ———————————————————
        141     94   94
        940    470
         29     23
    ———————————————————
       1110    587   94
    ———————————————————
       1110      7    2
    ===================  Answer: 1110 ton 7 cwt 2 qtr

First multiply the quarters by 47; the result 94 is written into the first workspace. Next, multiply cwt 12*47 = (2 + 10)*47 but don't add up the partial results (94, 470) yet. Likewise multiply 23 by 47 yielding (141, 940). The quarters column is totaled and the result placed in the second workspace (a trivial move in this case). 94 quarters is 23 cwt and 2 qtr, so place the 2 in the answer and put the 23 in the next column left. Now add up the three entries in the cwt column giving 587. This is 29 t 7 cwt, so write the 7 into the answer and the 29 in the column to the left. Now add up the tons column. There is no adjustment to make, so the result is just copied down.
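The column-by-column procedure, with carries passed from smaller units to larger ones, can be sketched in Python. The function name is illustrative, and the unit ratios (4 qtr per cwt, 20 cwt per ton) are the avoirdupois ones given above; another non-decimal system would change only the divisors.

```python
def multiply_tons_cwt_qtr(tons, cwt, qtr, factor):
    """Multiply a quantity in tons, hundredweight and quarters by a whole number."""
    # Work from the smallest unit upward, carrying into the next column as on paper.
    carry, qtr_out = divmod(qtr * factor, 4)           # 4 qtr = 1 cwt
    carry, cwt_out = divmod(cwt * factor + carry, 20)  # 20 cwt = 1 t
    tons_out = tons * factor + carry                   # no larger unit, so nothing to carry
    return tons_out, cwt_out, qtr_out


assert multiply_tons_cwt_qtr(23, 12, 2, 47) == (1110, 7, 2)
```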

The same layout and methods can be used for any traditional measurements and non-decimal currencies such as the old British £sd system.

See also

- Binary multiplier

- Division algorithm

- Logarithm

- Mental calculation

- Prosthaphaeresis

- Slide rule

- Trachtenberg system

- Horner scheme for evaluating a polynomial

- Residue number system § Multiplication for another fast multiplication algorithm, especially efficient when many operations are done in sequence, such as in linear algebra

- Dadda multiplier

- Wallace tree

References

- ^ "Multiplication". www.mathematische-basteleien.de . Retrieved 2022-03-15 .

- ^ Brent, Richard P (March 1978). "A Fortran Multiple-Precision Arithmetic Package". ACM Transactions on Mathematical Software. 4: 57–70. CiteSeerX 10.1.1.117.8425. doi:10.1145/355769.355775. S2CID 8875817.

- ^ Gary Eason, Back to school for parents, BBC News, 13 February 2000

Rob Eastaway, Why parents can't do maths today, BBC News, 10 September 2010

- ^ Corlu, M. S., Burlbaw, L. M., Capraro, R. M., Corlu, M. A., & Han, S. (2010). The Ottoman Palace School Enderun and The Man with Multiple Talents, Matrakçı Nasuh. Journal of the Korea Society of Mathematical Education Series D: Research in Mathematical Education. 14(1), pp. 19–31.

- ^ Bogomolny, Alexander. "Peasant Multiplication". www.cut-the-knot.org. Retrieved 2017-11-04.

- ^ D. Wells (1987). The Penguin Dictionary of Curious and Interesting Numbers. Penguin Books. p. 44.

- ^ Cool Multiplication Math Trick, archived from the original on 2021-12-11, retrieved 2020-03-14

- ^ McFarland, David (2007), Quarter Tables Revisited: Earlier Tables, Division of Labor in Table Construction, and Later Implementations in Analog Computers, p. 1

- ^ Robson, Eleanor (2008). Mathematics in Ancient Iraq: A Social History. p. 227. ISBN 978-0691091822.

- ^ "Reviews", The Civil Engineer and Architect's Journal: 54–55, 1857.

- ^ Holmes, Neville (2003), "Multiplying with quarter squares", The Mathematical Gazette, 87 (509): 296–299, doi:10.1017/S0025557200172778, JSTOR 3621048, S2CID 125040256.

- ^ Johnson, Everett L. (March 1980), "A Digital Quarter Square Multiplier", IEEE Transactions on Computers, Washington, DC, USA: IEEE Computer Society, vol. C-29, no. 3, pp. 258–261, doi:10.1109/TC.1980.1675558, ISSN 0018-9340, S2CID 24813486

- ^ Putney, Charles (Mar 1986), "Fastest 6502 Multiplication Yet", Apple Assembly Line, vol. 6, no. 6

- ^ D. Knuth, The Art of Computer Programming, vol. 2, sec. 4.3.3 (1998)

- ^ A. Schönhage and V. Strassen, "Schnelle Multiplikation großer Zahlen", Computing 7 (1971), pp. 281–292.

- ^ Fürer, M. (2007). "Faster Integer Multiplication" in Proceedings of the thirty-ninth annual ACM symposium on Theory of computing, June 11–13, 2007, San Diego, California, USA

- ^ Anindya De, Piyush P Kurur, Chandan Saha, Ramprasad Saptharishi. Fast Integer Multiplication Using Modular Arithmetic. Symposium on Theory of Computation (STOC) 2008.

- ^ Hartnett, Kevin (2019-04-11). "Mathematicians Discover the Perfect Way to Multiply". Quanta Magazine. Retrieved 2019-05-03.

- ^ Harvey, David; van der Hoeven, Joris (2021). "Integer multiplication in time $O(n \log n)$". Annals of Mathematics. Second Series. 193 (2): 563–617. doi:10.4007/annals.2021.193.2.4. MR 4224716.

- ^ Sanjeev Arora and Boaz Barak, Computational Complexity: A Modern Approach, Cambridge University Press, 2009.

- ^ Farid Ablayev and Marek Karpinski, A lower bound for integer multiplication on randomized ordered read-once branching programs, Information and Computation 186 (2003), 78–89.

- ^ Knuth, Donald E. (1988), The Art of Computer Programming volume 2: Seminumerical algorithms, Addison-Wesley, pp. 519, 706

- ^ P. Duhamel and M. Vetterli, "Fast Fourier transforms: A tutorial review and a state of the art" Archived 2014-05-29 at the Wayback Machine, Signal Processing vol. 19, pp. 259–299 (1990), section 4.1.

- ^ S. G. Johnson and M. Frigo, "A modified split-radix FFT with fewer arithmetic operations," IEEE Trans. Signal Process. vol. 55, pp. 111–119 (2007), section IV.

- ^ "Strassen algorithm for polynomial multiplication". Everything2.

- ^ von zur Gathen, Joachim; Gerhard, Jürgen (1999), Modern Computer Algebra, Cambridge University Press, pp. 243–244, ISBN 978-0-521-64176-0.

- ^ Castle, Frank (1900). Workshop Mathematics. London: MacMillan and Co. p. 74.

Further reading

- Warren Jr., Henry S. (2013). Hacker's Delight (2 ed.). Addison Wesley - Pearson Education, Inc. ISBN 978-0-321-84268-8.

- Savard, John J. G. (2018) [2006]. "Advanced Arithmetic Techniques". quadibloc. Archived from the original on 2018-07-03. Retrieved 2018-07-16.

- Johansson, Kenny (2008). Low Power and Low Complexity Shift-and-Add Based Computations (PDF) (Dissertation thesis). Linköping Studies in Science and Technology (1 ed.). Linköping, Sweden: Department of Electrical Engineering, Linköping University. ISBN 978-91-7393-836-5. ISSN 0345-7524. No. 1201. Archived (PDF) from the original on 2017-08-13. Retrieved 2021-08-23. (x+268 pages)

External links

Basic arithmetic

- The Many Ways of Arithmetic in UCSMP Everyday Mathematics

- A Powerpoint presentation about ancient mathematics

- Lattice Multiplication Flash Video

Advanced algorithms

- Multiplication Algorithms used by GMP

Source: https://en.wikipedia.org/wiki/Multiplication_algorithm
